This is Part 2 of our blog series on creating and using training data to build object detection models using deep learning. In Part 1, we discussed tips for labeling objects on images. In Part 2, we are going to cover tips on preparing and using training samples to build the best possible object detection models.

You can apply these tips when exporting image chips with the Export Training Data for Deep Learning tool and when training the model with the Train Deep Learning Model tool.

Here are our top 6 tips:

1. Use a Tile Size that covers your objects well

Choose a tile size that fully contains your objects while leaving enough surrounding context for accurate detection. For object detection, the right Tile Size depends on the spatial resolution of the imagery, the size of the objects to be detected, and the computational resources available. Smaller tile sizes are computationally efficient but may sacrifice contextual information. Larger tile sizes capture more context but require more memory and computational power.

A common practice is to choose a tile size that is large enough to capture the entire object of interest along with some surrounding context. When object size varies a lot in your samples, one approach is to use three times the average object size. If your tiles are too big for the GPU, you can use a smaller batch size.
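The "three times the average object size" rule of thumb can be turned into a quick calculation. The sketch below is only an illustration: the function name, the sample object sizes, and the rounding up to a multiple of 32 pixels are assumptions made for this example, not settings taken from the tools.

```python
import math

def suggest_tile_size(object_sizes, cell_size, factor=3.0, multiple=32):
    """Suggest a square tile size in pixels.

    object_sizes: object extents in map units (for example, meters).
    cell_size: spatial resolution of the imagery in the same units per pixel.
    factor: how many average object sizes one tile should span.
    multiple: round up to this multiple (an assumption for this sketch).
    """
    mean_size_px = (sum(object_sizes) / len(object_sizes)) / cell_size
    raw_size = factor * mean_size_px
    return int(math.ceil(raw_size / multiple) * multiple)

# Hypothetical objects of 12 m, 18 m, and 30 m on 0.3 m imagery.
tile_size = suggest_tile_size([12.0, 18.0, 30.0], cell_size=0.3)
print(tile_size)
```

Treat the result as a starting point and adjust it after checking how the exported chips look and whether they fit on your GPU.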

2. Use a Stride with Tile Size, if applicable

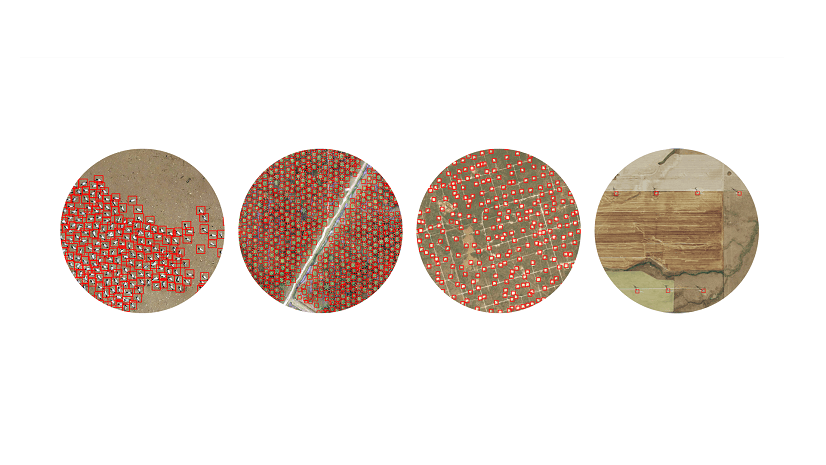

Choose the right stride value to control the overlap between image tiles. The tile size determines the size of each image chip, while the stride determines the step size between consecutive chips. Overlapping tiles can be beneficial when objects span multiple tiles: overlap helps reduce information loss, improve context understanding, and enhance model generalization. When you export training samples, you can set the Stride Size parameter, which is the distance to move in the X and Y directions when creating the next image tile. When the stride is equal to the tile size, there is no overlap. When the stride is equal to half of the tile size, there is 50% overlap.

A smaller stride produces more overlap, but it also increases the number of tiles and therefore requires more computational resources. A common practice is to use a stride that is half the tile size. This results in 50% overlap between tiles, which is often a good balance between capturing spatial information and avoiding overfitting.
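To see how stride affects overlap and tile count, the relationship can be sketched in a few lines. This is a simplified model that only counts tiles lying fully inside the image; the export tool may treat image edges differently, and the function names here are hypothetical.

```python
def overlap_fraction(tile_size, stride):
    """Fraction of a tile shared with the next tile along one axis."""
    return max(0.0, 1.0 - stride / tile_size)

def tile_origins(image_size, tile_size, stride):
    """Start offsets along one axis for tiles that fit fully inside the image."""
    if image_size < tile_size:
        return []
    return list(range(0, image_size - tile_size + 1, stride))

def tile_count(width, height, tile_size, stride):
    """Number of full tiles produced for an image of the given size."""
    columns = len(tile_origins(width, tile_size, stride))
    rows = len(tile_origins(height, tile_size, stride))
    return columns * rows

# A 1024 x 1024 image with 256-pixel tiles.
print(overlap_fraction(256, 256), tile_count(1024, 1024, 256, 256))
print(overlap_fraction(256, 128), tile_count(1024, 1024, 256, 128))
```

In this example, halving the stride roughly triples the number of tiles, which is the extra cost that the added overlap brings.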

3. Use a Chip Size different from the Tile Size if necessary

Set Chip Size to override Tile Size. You may be given large image chips and want to reduce their size for training so that they fit in GPU memory. One way to do this is to use the Chip Size parameter in the Train Deep Learning Model tool. The image chips are cropped to the specified Chip Size, and Tile Size is not used. If the Tile Size is smaller than the Chip Size, the Tile Size is used instead. In general, your Tile Size and Chip Size should be the same.
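The rule described above amounts to training on the smaller of the two sizes. The sketch below restates that rule and adds a rough pixel count per batch to show why a smaller chip eases memory pressure. Both functions are hypothetical helpers, and the pixel count is only a proxy: actual GPU memory use also depends on the model architecture.

```python
def effective_chip_size(tile_size, chip_size=None):
    """Size used for training: Chip Size, unless Tile Size is smaller or Chip Size is unset."""
    if chip_size is None:
        return tile_size
    return min(tile_size, chip_size)

def pixels_per_batch(size, batch_size, bands=3):
    """Rough count of input values in one batch (a proxy for memory, not a measurement)."""
    return size * size * bands * batch_size

size = effective_chip_size(tile_size=512, chip_size=256)
ratio = pixels_per_batch(512, 8) / pixels_per_batch(size, 8)
print(size, ratio)
```

Halving the chip side length cuts the input pixels per batch by a factor of four, which is why cropping large chips can make the difference between fitting on the GPU or not.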

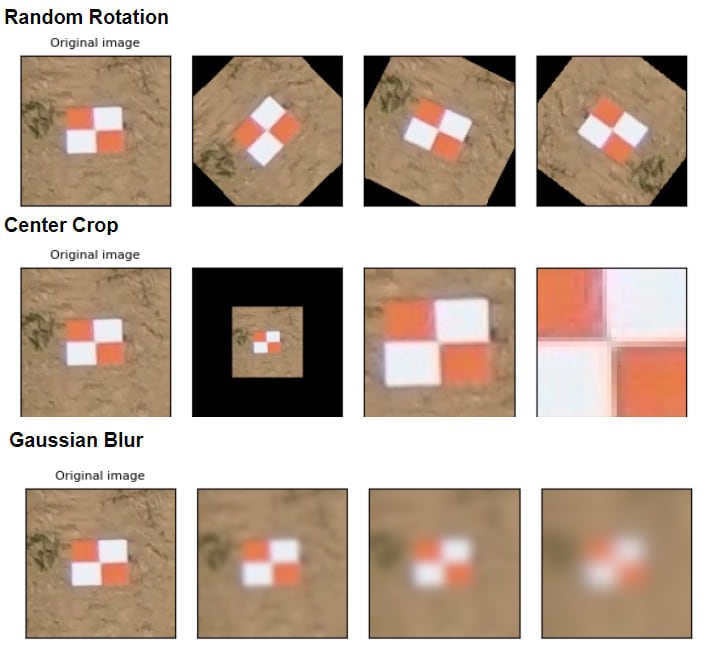

4. Use data augmentation

Leverage data augmentation to combat overfitting, especially when training on limited or homogeneous data. Data augmentation artificially increases the size of a dataset by randomly changing properties of the image chips, such as rotation, brightness, and crop. The Train Deep Learning Model tool lets you define data augmentation for both training and validation data. You can use the default settings, turn augmentation off, customize the existing methods, or supply a JSON file containing data augmentation methods supported by vision transforms.
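To make the idea concrete, here is a minimal pure-Python sketch of two augmentations: a horizontal flip and a brightness change. It is not how the tool implements augmentation; it assumes a chip stored as a list of pixel rows and boxes given as `(xmin, ymin, xmax, ymax)` in pixel edges. The point it illustrates is that for object detection, geometric changes must be applied to the bounding boxes as well as the pixels.

```python
import random

def hflip(chip, boxes):
    """Mirror a chip left to right and move its boxes accordingly."""
    width = len(chip[0])
    flipped_chip = [row[::-1] for row in chip]
    flipped_boxes = [
        (width - xmax, ymin, width - xmin, ymax)
        for xmin, ymin, xmax, ymax in boxes
    ]
    return flipped_chip, flipped_boxes

def adjust_brightness(chip, factor):
    """Scale pixel values by a factor and clamp them to the 0-255 range."""
    return [[min(255, max(0, round(v * factor))) for v in row] for row in chip]

def augment(chip, boxes, rng, flip_prob=0.5, brightness_range=(0.8, 1.2)):
    """Randomly flip the chip, then apply a random brightness factor."""
    if rng.random() < flip_prob:
        chip, boxes = hflip(chip, boxes)
    chip = adjust_brightness(chip, rng.uniform(*brightness_range))
    return chip, boxes

chip = [[0, 10, 20, 30], [40, 50, 60, 70]]   # tiny single-band chip
boxes = [(0, 0, 1, 2)]                        # box covering the first column
new_chip, new_boxes = augment(chip, boxes, random.Random(0))
```

Brightness changes leave the boxes untouched, while flips, rotations, and crops all need a matching box transform.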

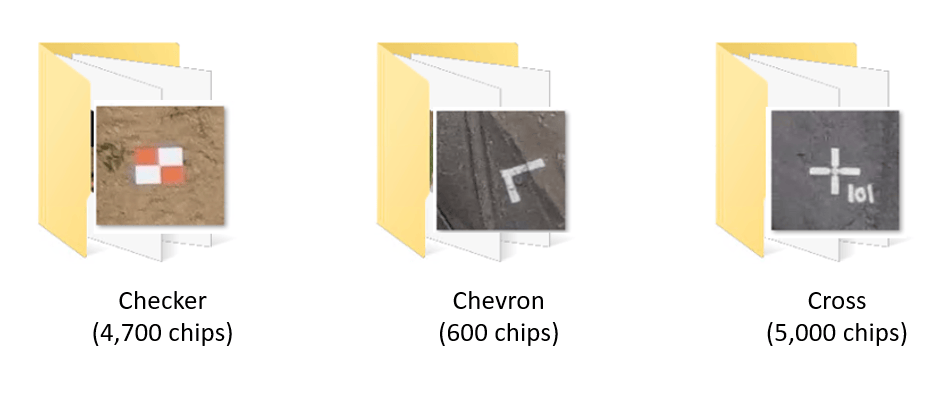

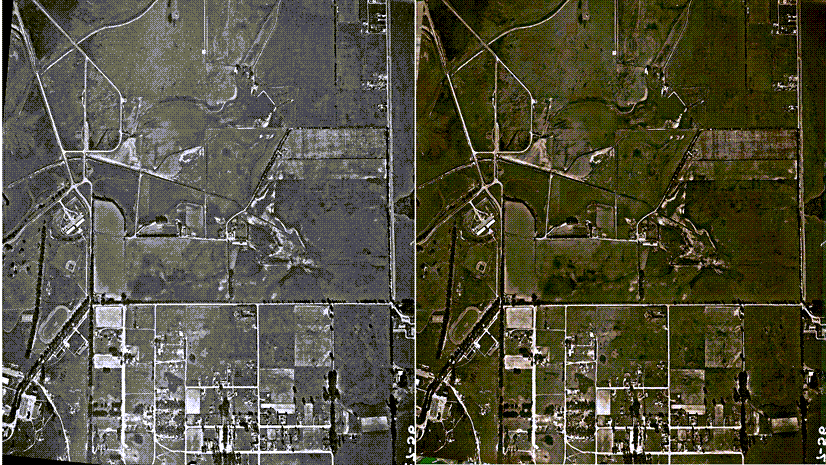

5. Address class imbalance

Balance your class distribution for optimal performance. Class imbalance is a common problem in object detection, where the training dataset has many more examples of one class than of others. This can cause the model to favor the more numerous classes and perform poorly on the less numerous ones. It's best to start with about the same number of samples for each class. If your dataset is already imbalanced, you can add more samples to the underrepresented classes, randomly duplicate samples from the minority class, or randomly remove samples from the majority class.
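The duplication and removal strategies can be sketched as random oversampling and undersampling. This example makes a simplifying assumption that each sample carries a single class label, stored as a `(sample_id, label)` pair; real chips often contain several classes, so adapt the grouping to your data. The function names are hypothetical.

```python
import random
from collections import Counter, defaultdict

def group_by_class(samples):
    """Group (sample_id, label) pairs by label."""
    groups = defaultdict(list)
    for sample in samples:
        groups[sample[1]].append(sample)
    return groups

def oversample(samples, rng):
    """Randomly duplicate minority-class samples up to the largest class size."""
    groups = group_by_class(samples)
    target = max(len(items) for items in groups.values())
    balanced = []
    for items in groups.values():
        balanced.extend(items)
        balanced.extend(rng.choices(items, k=target - len(items)))
    return balanced

def undersample(samples, rng):
    """Randomly drop majority-class samples down to the smallest class size."""
    groups = group_by_class(samples)
    target = min(len(items) for items in groups.values())
    balanced = []
    for items in groups.values():
        balanced.extend(rng.sample(items, target))
    return balanced

samples = [(i, "car") for i in range(8)] + [(i, "truck") for i in range(8, 10)]
rng = random.Random(0)
print(Counter(label for _, label in oversample(samples, rng)))
print(Counter(label for _, label in undersample(samples, rng)))
```

Oversampling keeps every original sample but repeats some of them, while undersampling discards data, so which one fits depends on how much data you can afford to lose.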

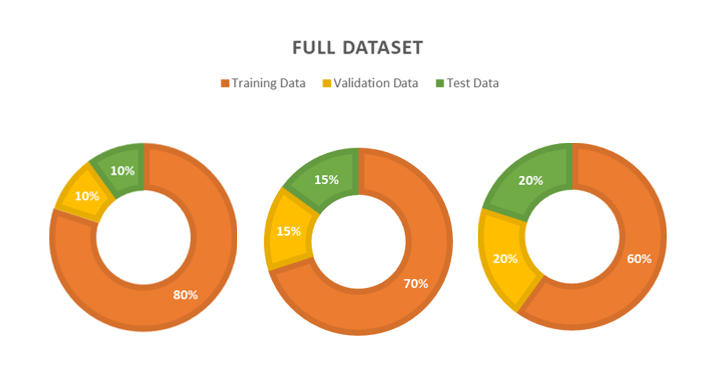

6. Divide train-validation-test datasets

Split your dataset into training, validation, and test sets to prepare for model training and evaluation. The training set is used to train the model. The validation set is used to assess whether the model is overfitting to the training data. The test set contains data that the model has not seen during training or validation and is used to evaluate how well the model generalizes. The right balance between training, validation, and test sets depends on the specific task and dataset. However, a common practice is to allocate around 60-80% of the data for training, 10-20% for validation, and 10-20% for testing. For exceptionally large datasets, setting aside 20-40% of the data for validation and testing might be impractical. In such cases, validation and test sets of even 2-3% can be sufficient.
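A random three-way split along these lines might look like the following sketch. The 70/15/15 proportions, the fixed seed, and the function name are illustrative choices within the ranges mentioned above, not recommendations from the tools.

```python
import random

def split_dataset(items, train=0.7, val=0.15, seed=42):
    """Shuffle items and split them into train, validation, and test lists.

    Whatever remains after the train and validation shares goes to the test set.
    """
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = round(len(items) * train)
    n_val = round(len(items) * val)
    train_set = items[:n_train]
    val_set = items[n_train:n_train + n_val]
    test_set = items[n_train + n_val:]
    return train_set, val_set, test_set

chip_ids = list(range(100))  # hypothetical chip identifiers
train_set, val_set, test_set = split_dataset(chip_ids)
print(len(train_set), len(val_set), len(test_set))
```

Fixing the seed keeps the split reproducible, so different training runs are evaluated against the same test set.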

Conclusion

We hope these tips help you build high-quality object detection deep learning models. Please note that these tips are general guidelines, and the best settings may vary depending on your specific use case, the characteristics of your data, and the deep learning model architecture you are working with. You should experiment with different scenarios and evaluate the performance of your models to determine the values and methods that work best for you.
