Update (5 November 2021): The CityEngine VR Experience 2021.1 for Unreal Engine 4.27 is available in the Unreal Marketplace. The project template is free; the link is listed under Availability and Links below.

Virtual reality (VR) offers many exciting opportunities for urban planning: It allows city officials, planners, designers and citizens to immerse themselves in a virtual environment and view, discuss or modify possible development scenarios in ways that have not been possible on regular computer screens or with physical models.

The CityEngine VR Experience is a complete solution for easily creating a premium VR application to explore your 3D city models and urban planning scenarios. It builds on a combination of ArcGIS CityEngine, which is used for data integration, 3D modeling and scenario development, and Epic Games' Unreal Engine, which renders the scene in real time, controls the VR headset and its controllers, and allows for collaborative studies using multiple headsets over the network. The experience comes as a ready-to-use and extensible Unreal Engine project template, into which CityEngine scenes can be easily imported, then configured and viewed.

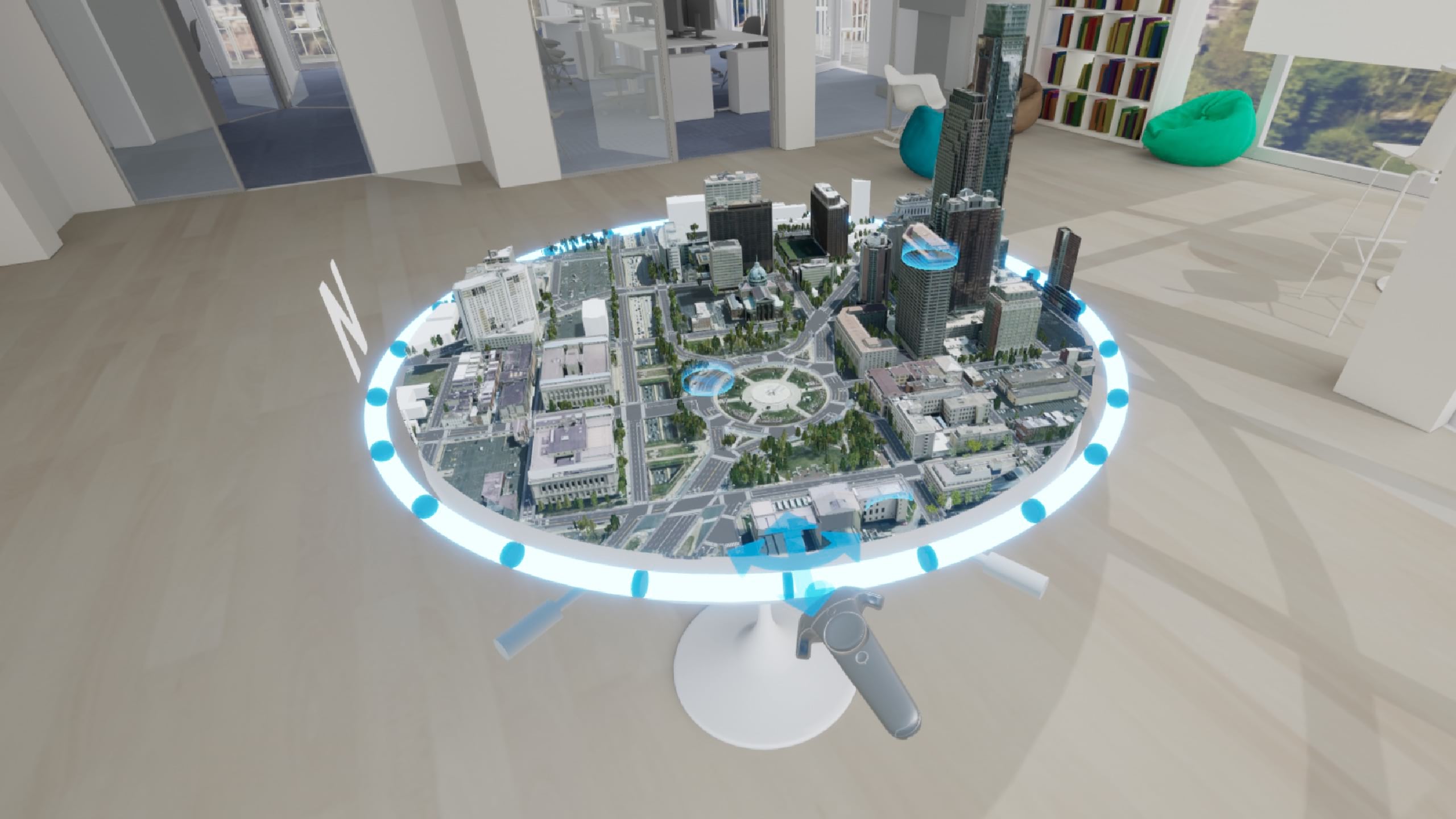

The basic idea of the VR experience is to create an environment that planners and other stakeholders are familiar with: Users initially find themselves in a virtual planning office with a 360° view, for example of their city (Figure 1). Centrally located in the office is a table with the 3D city model placed on top. In VR, users can then intuitively interact with the 3D city model to

- collaboratively review and compare multiple urban planning scenarios (using multiple VR headsets),

- interactively analyze the sun shadows throughout the day,

- teleport and immerse themselves into the 3D city model and view the current situation and future scenarios at full scale.

Compared to the existing ArcGIS 360 VR solution for mobile headsets, which is based on static viewpoints, the CityEngine VR Experience offers a much more dynamic experience, where users can freely move around 3D city models and interact with them.

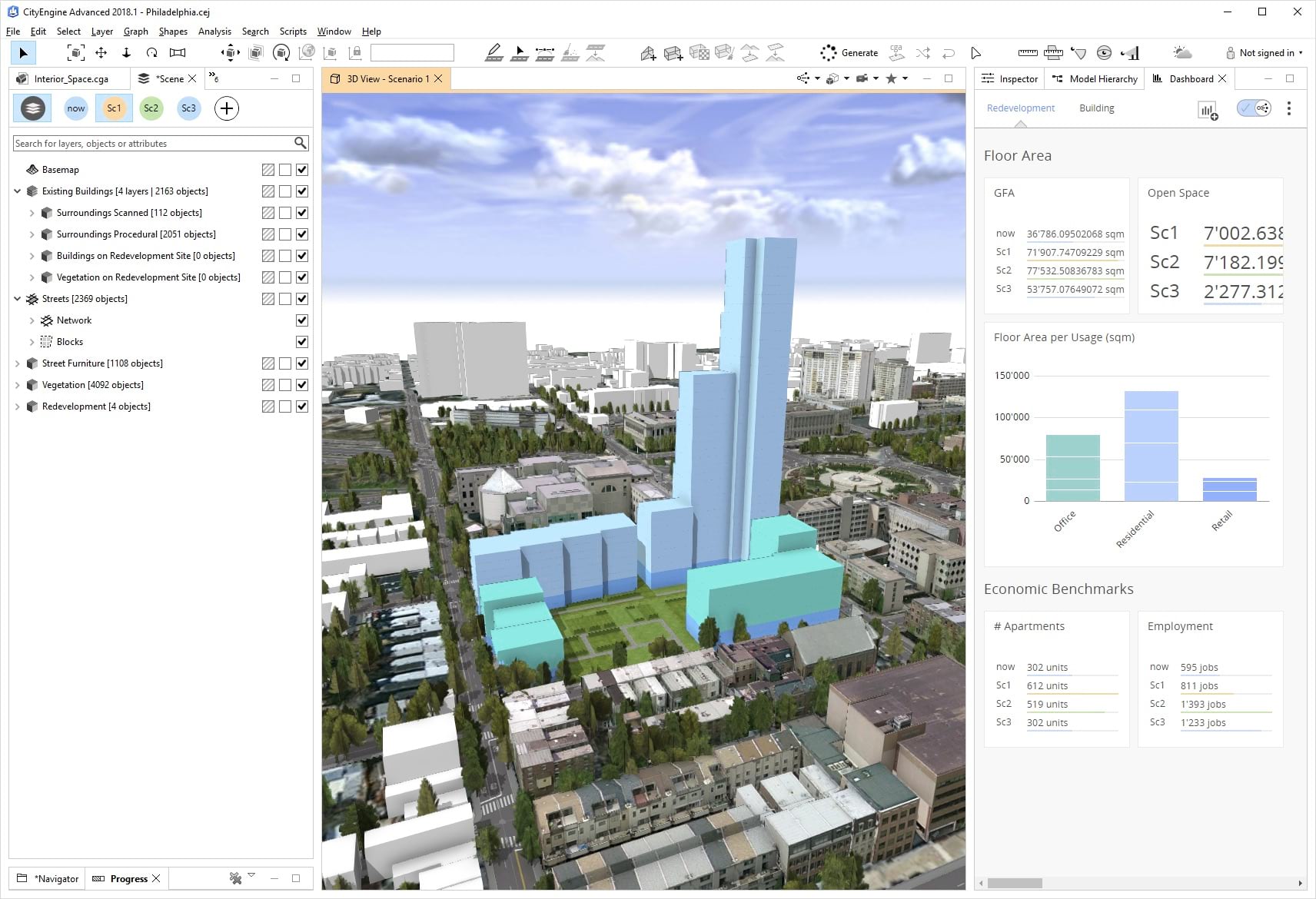

Urban Design with CityEngine

ArcGIS CityEngine is a procedural modeling tool that allows for rapid transformation of 2D GIS data into smart 3D city models (Figure 2). More recent versions of CityEngine increasingly incorporate dedicated tools for urban planners, such as the visibility analysis tools introduced in CityEngine 2018.1. However, CityEngine is primarily a modeling and reporting tool and is not optimized for high-quality real-time rendering. Urban planning applications that target a broad audience require high-quality real-time rendering and an extensible authoring environment in which additional interaction and user interface features can be added and self-contained software applications can be built. This is where game engines come into play, since they satisfy all of these requirements.

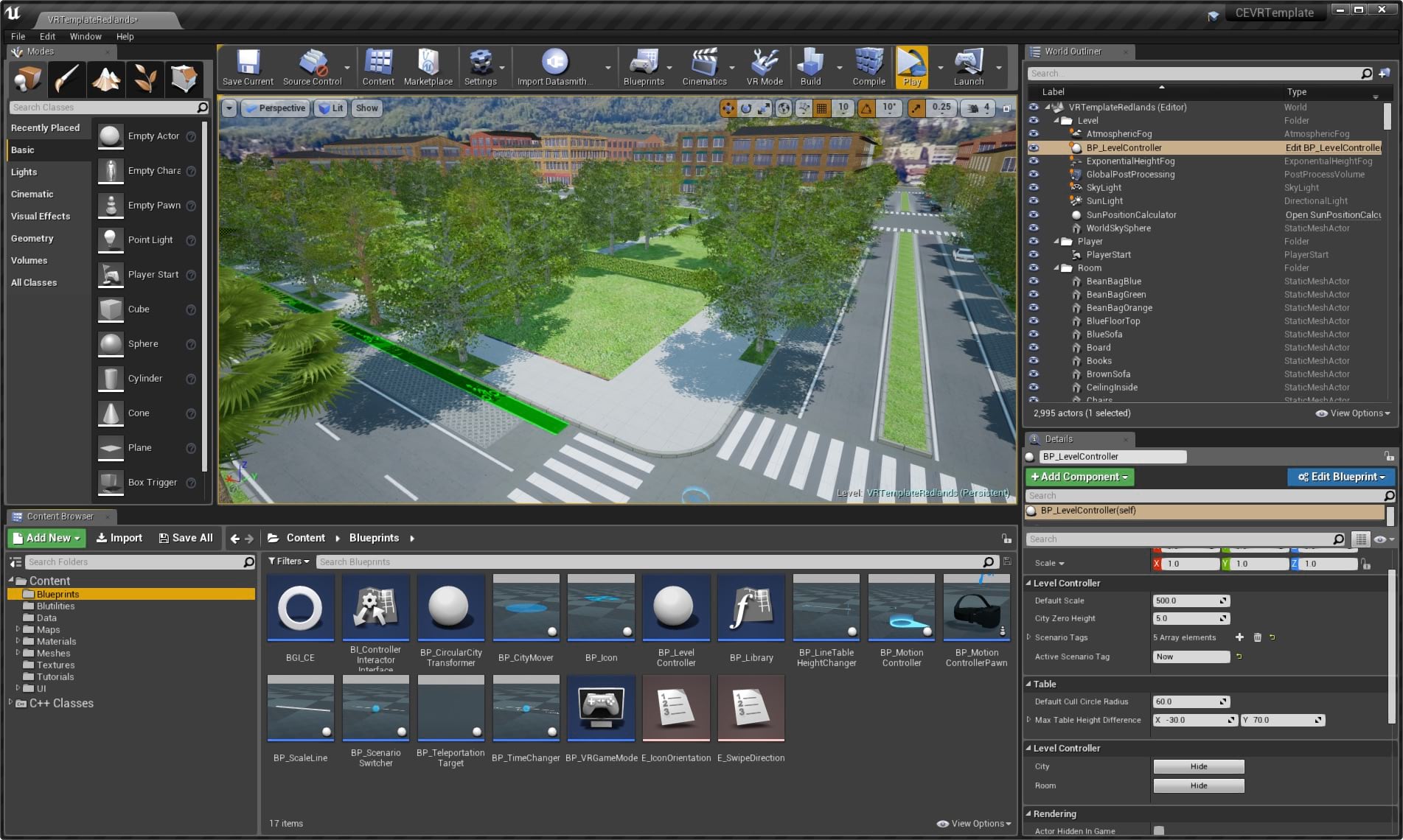

From CityEngine to Unreal Engine 4

In 2017, Epic Games announced Datasmith for Unreal Studio, the former enterprise suite based on Unreal Engine. Since Unreal Engine 4.24, Datasmith has been generally available to all Unreal users. Datasmith is a workflow toolkit that streamlines the import of data into Unreal Engine and reduces tedious manual conversion tasks to a minimum. Since the release of CityEngine 2017.1, CityEngine has supported exporting city models using Datasmith, and the functionality has been continuously extended. Customers quickly started making use of the CityEngine to UE4 workflow:

CityEngine allows us to model HOK’s massive urban planning projects. In the past, creating interactive high-end visualizations of several hundred thousand buildings was a challenge. Now, with the new CityEngine 2017.1, we can export directly to Unreal Engine. This enables us to craft fluid, data rich, real-time rendered experiences for our clients and stakeholders.

While this workflow can be used for creating a broad range of applications in UE4, it is also at the center of the CityEngine VR Experience: getting a model from CityEngine to UE4, and then embedding it in an interactive planning environment with high-quality rendering and the appropriate navigation and interaction features (Figure 3).

Using the VR Experience

The users can approach the table and interact with the city model using a small set of basic actions: First, using the headset’s controller, the model can be panned, rotated and zoomed. As shown in Figure 4, this happens very easily by grabbing the ring around the table (for rotating and zooming) or by holding the controller above the model (for panning).
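The three table actions can be thought of as edits to a single view transform. The following is only an illustrative sketch in Python, not the template's actual Unreal Engine implementation; the `TableView` class, its field names, and the order in which scale, rotation and pan are applied are all assumptions made for this example.

```python
import math
from dataclasses import dataclass


@dataclass
class TableView:
    """Hypothetical view state of the city model on the table."""
    offset_x: float = 0.0   # pan offset in table units
    offset_y: float = 0.0
    angle_rad: float = 0.0  # rotation about the vertical axis
    scale: float = 1.0      # zoom factor

    def pan(self, dx: float, dy: float) -> None:
        """Shift the model across the table (controller held above the model)."""
        self.offset_x += dx
        self.offset_y += dy

    def rotate(self, delta_rad: float) -> None:
        """Turn the model about the vertical axis (ring grabbed and turned)."""
        self.angle_rad = (self.angle_rad + delta_rad) % (2.0 * math.pi)

    def zoom(self, factor: float) -> None:
        """Scale the model up or down (ring grabbed and pulled)."""
        if factor <= 0.0:
            raise ValueError("zoom factor must be positive")
        self.scale *= factor

    def to_table(self, x: float, y: float) -> tuple[float, float]:
        """Map a model coordinate to table space: scale, rotate, then pan."""
        c, s = math.cos(self.angle_rad), math.sin(self.angle_rad)
        xs, ys = x * self.scale, y * self.scale
        return (c * xs - s * ys + self.offset_x,
                s * xs + c * ys + self.offset_y)


view = TableView()
view.zoom(2.0)               # zoom in by a factor of two
view.rotate(math.pi / 2.0)   # quarter turn
view.pan(1.0, 0.0)           # slide one unit along the table's x axis
print(view.to_table(1.0, 0.0))
```

Keeping pan, rotation and zoom as separate state values, rather than accumulating a matrix, makes each gesture independent of the others in this sketch.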

After identifying an area of interest and viewing it from an aerial view, the users can decide to immerse themselves in the model. This can happen either through predefined point-of-interest (PoI) indicators or by teleporting to any desired location. The users will then find themselves in the city and can move around (again through teleporting), get on top of buildings for a bird's-eye view, and so on. Being able to step right into a planning scenario is crucial for obtaining a sense of scale and an impression of specific views.

CityEngine supports modeling of multiple design scenarios within the same project, and these scenarios can be imported in the VR experience as well. Users can activate a menu by tilting their controllers (with a similar gesture to looking at a wristwatch), and from there select, switch and compare different design scenarios (Figure 5). Using the same menu, users can also change the time of day in order to inspect the effect of shadows thrown by tall buildings or by vegetation.
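To give a feel for why the time of day matters so much when judging shadows, here is a small Python sketch of the underlying geometry. It is not how the VR experience computes shadows (those are rendered by Unreal Engine); it only assumes flat ground and a vertical building, and the function name `shadow_length` and the sample elevation angles are made up for illustration.

```python
import math


def shadow_length(height_m: float, sun_elevation_deg: float) -> float:
    """Length of the shadow a vertical object casts on flat ground.

    Assumes the sun is above the horizon (0 < elevation <= 90 degrees).
    """
    if not 0.0 < sun_elevation_deg <= 90.0:
        raise ValueError("sun elevation must be in (0, 90] degrees")
    return height_m / math.tan(math.radians(sun_elevation_deg))


# A hypothetical 100 m tower at several sun elevations:
# the lower the sun, the longer the shadow.
for elevation in (15, 30, 45, 60):
    print(f"{elevation:>2} deg: {shadow_length(100.0, elevation):6.1f} m")
```

With the sun at 45 degrees the shadow is as long as the building is tall; at lower elevations it grows quickly, which is why morning and evening settings are the most revealing when comparing scenarios with tall buildings.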

Future Work

Besides the aforementioned collaboration features, we are working on enhancing the VR experience in a number of directions: Game engines have very advanced sequencing and animation capabilities, which offer a good opportunity to make the scenes more dynamic by adding pedestrians and vehicles. Foliage could also be animated, for example with tree branches moving in the wind. Another direction is to add support for different lighting and weather conditions. Finally, we are working towards adding new interactive tools, so that designs can be changed during a planning session. With CityEngine's procedural runtime, this can be achieved relatively easily, for example by changing the height of a building or its appearance. Ultimately, we are seeking to create a new generation of urban planning applications that can be used by experts and non-experts alike.

Availability and Links

The CityEngine VR Experience is available in the Unreal Marketplace: https://www.unrealengine.com/marketplace/en-US/product/98c99ed82ddd48f9bfdd1e98c311ce06

CityEngine VR Experience guide on Esri Community: https://arcg.is/0u0y11

ArcGIS CityEngine: https://www.esri.com/en-us/arcgis/products/arcgis-cityengine/overview

Unreal Engine: https://www.unrealengine.com
