by Alison Wood, Graduate Student, The University of Texas at Austin

As GIS users, we often have to collect data from many sources and compile them into a single map. With just a few sources and a one-off map, doing this by hand is feasible. But what if you have to make a new map with updated data every day? Or every hour? Automation saves the enormous amount of time that would take, and it helps avoid the errors that creep into repetitive manual work. In this blog entry, I’ll describe an example of automating a process that retrieves data, converts file formats, and updates an online map; I’ll also talk a little about some of the tools and strategies I used, which should be useful to anyone automating a similar process.

The Project

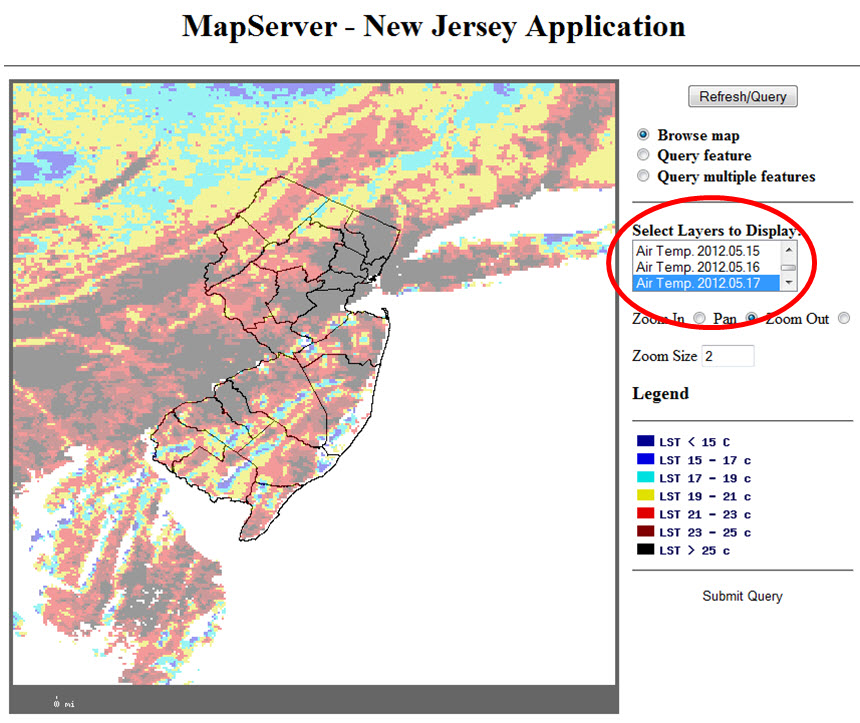

In the spring of 2011, Dr. David Hill’s research group at Rutgers University was working with online maps showing MODIS Terra satellite temperature data, along with data from other sources. New temperature snapshots are made available daily on the USGS LPDAAC FTP server, so the online map needed to be updated daily. Rather than having someone spend valuable research time continually downloading files and updating the map, Dr. Hill had me write a series of scripts to do the job.

The Strategy

I decided to split the job into three major tasks:

- Get the files from the FTP server,

- Do the format conversions on the files, and

- Update the online map with the new files.

These seemed like logical divisions of the work, and each could be a self-contained unit. If you’re not familiar with programming, you might find it helpful to know that this type of planning, done before you start thinking in programming terms, is typically considered a good way to start working on a computer program or script.

Continuing with that pre-programming process, I wrote out the specific steps I would take if I were going to get the files, convert them, and update the map, all by hand. Once I understood the steps, I began to look for scripting tools. (I already knew I would be scripting in Shell in a Linux environment, because that’s standard for our group.)
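As a rough illustration of that plan, the three tasks can be laid out as one Shell function each and run in order. This is only a skeleton under assumed names: `fetch_files`, `convert_files`, `update_map`, and `run_pipeline` are hypothetical and not taken from the original scripts, and each body is a placeholder to be filled in later.

```shell
#!/bin/sh
# Skeleton only: function names are hypothetical, bodies are placeholders.

fetch_files() {
    echo "task 1: get the files from the FTP server"
}

convert_files() {
    echo "task 2: do the format conversions on the files"
}

update_map() {
    echo "task 3: update the online map with the new files"
}

# Run the tasks in order; '&&' stops the chain as soon as one task fails,
# so the map is never updated from files that failed to download or convert.
run_pipeline() {
    fetch_files && convert_files && update_map
}
```

Calling `run_pipeline` at the end of the script runs all three tasks. Keeping each task in its own function also makes it possible to test one step at a time.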

The Key Tools

The first key tool I found was the wget command. It’s a powerful command-line utility that allowed me to make a partial mirror of the FTP site on a local computer. Mirroring took care of keeping the local copies up to date: wget compares the time stamps of local files with those of the files on the server and downloads only what has changed.
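A minimal sketch of such a partial mirror is shown here. The server URL, the local directory, and the `*.hdf` file pattern are placeholders chosen for illustration (the post does not give the real values), and the `mirror_site` function with its `DRY_RUN` switch is a hypothetical wrapper. The wget options themselves are standard ones.

```shell
#!/bin/sh
# Hypothetical values: the real server path and file pattern are not given in the post.
REMOTE_URL="ftp://ftp.example.org/path/to/product/"
LOCAL_DIR="./mirror"

# Print the wget command by default; set DRY_RUN=0 to actually run it.
mirror_site() {
    # -r   recurse into subdirectories
    # -N   time-stamping: fetch a file only if the server copy is newer
    #      than the local copy (or the sizes differ)
    # -np  never ascend to the parent directory, which keeps the mirror partial
    # -nH  do not create a top-level directory named after the host
    # -A   keep only files whose names match this pattern
    # -P   save everything under LOCAL_DIR
    set -- wget -r -N -np -nH -A '*.hdf' -P "$LOCAL_DIR" "$REMOTE_URL"
    if [ "${DRY_RUN:-1}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}
```

Running `mirror_site` prints the command so it can be checked before anything is downloaded; `DRY_RUN=0 mirror_site` executes it.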

The next set of crucial tools came from GDAL, the Geospatial Data Abstraction Library. This library ships with ready-made commands that can ingest certain file formats and output GeoTIFFs and shapefiles. (GDAL can also be used with other programming languages, such as Java, Perl, and Python.)
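The following sketch shows what two such conversions might look like from the Shell. It is an assumption-laden example, not the original scripts: the post does not say which GDAL utilities were used, so `gdal_translate` (raster to GeoTIFF) and `gdal_contour` (raster to contour-line shapefile) are simply two real utilities that produce those output types. The function names, the `temp` attribute name, the contour interval of 1.0, and the file names are all hypothetical.

```shell
#!/bin/sh
# Print each command by default; set DRY_RUN=0 to execute it instead.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# Convert one raster to GeoTIFF, naming the output after the input.
# $1 must be a dataset GDAL can open; for a multi-layer HDF file that is
# one of the subdataset names reported by gdalinfo, not the bare file name.
to_geotiff() {
    src=$1
    dst="${src%.*}.tif"
    run gdal_translate -of GTiff "$src" "$dst"
}

# Write contour lines from a raster to a shapefile.
# -a names the attribute that stores each line's value; -i is the interval.
# Both values here are illustrative assumptions.
to_contour_shapefile() {
    src=$1
    dst="${src%.*}.shp"
    run gdal_contour -a temp -i 1.0 "$src" "$dst"
}
```

For example, `to_geotiff day1.hdf` prints the `gdal_translate` call that would write `day1.tif`, and `to_contour_shapefile day1.tif` prints the call that would write `day1.shp`.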

The last really important tool I discovered was the sed command. Sed is a stream editor, which means you can direct the computer to read through a file and make changes to the file’s contents as it reads. The upshot is that it’s easy to delete, add, or replace text in a file. Sed allowed me to rewrite the files that underlie the web map with references to the newest shapefiles of temperature information.
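As a small illustration of that kind of replacement, the sketch below swaps one shapefile name for another throughout a text file. The `swap_shapefile` function and the file names are hypothetical, and the post does not describe the format of the files behind the web map, so any text file containing the old name will do.

```shell
#!/bin/sh
# Replace every reference to one shapefile with another inside a text file.
# Usage: swap_shapefile FILE OLD_NAME NEW_NAME
swap_shapefile() {
    file=$1
    old=$2
    new=$3
    tmp="$file.tmp"
    # s|OLD|NEW|g substitutes every occurrence on each line. Using '|' as the
    # delimiter means paths containing '/' need no escaping. OLD is treated as
    # a regular expression, so a '.' in it matches any character; that is
    # harmless for simple file names but worth knowing.
    # Writing to a temporary file and renaming avoids relying on 'sed -i',
    # which is not available in every sed.
    sed "s|$old|$new|g" "$file" > "$tmp" && mv "$tmp" "$file"
}

# Example call (hypothetical names):
#   swap_shapefile map_config.txt temp_20110401.shp temp_20110402.shp
```

Because the function takes the old and new names as arguments, the same call can be repeated each day with whatever the newest file happens to be.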

Putting these commands together and filling in the rest of what the scripts needed to do was a long and often painful process, as programming tends to be for beginners, but it was worth it. In the end, we added the scripts to the server’s cron (which runs programs on the computer automatically at predetermined times), and now the online map is automatically updated with the seven most recent days’ data available from MODIS Terra.
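Two pieces of that last step can be sketched briefly. The comment at the top shows what a crontab entry for a daily run might look like; the schedule and paths are made up for illustration. The `newest` function is a hypothetical helper that picks the most recent seven names from a list, assuming the file names carry a sortable YYYYMMDD date stamp (the post does not describe the actual naming scheme).

```shell
#!/bin/sh
# A crontab entry (added with 'crontab -e') that would run a script every
# day at 06:30 and append its output to a log. Paths are hypothetical.
#
#   30 6 * * * /home/user/bin/update_map.sh >> /home/user/update_map.log 2>&1

# Read names on standard input and print the N newest (default 7).
# Relies on a YYYYMMDD stamp in each name, so that sorting the names
# alphabetically also sorts them by date.
newest() {
    sort | tail -n "${1:-7}"
}

# Example: list the seven most recent shapefiles in a directory.
#   ls ./shapefiles | grep '\.shp$' | newest
```

The selected names could then be fed, one at a time, into whatever step rewrites the map's files.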

One More Tip

Make friends with programmers. I knew a Shell programmer I could call with questions, and he was an invaluable resource in putting these scripts together. Even if you’re a good programmer in one language, having a friend who knows the language you’re less well versed in can be a great help.

For further details or copies of the scripts, email me at alisonwood@utexas.edu. To see the online map being updated by these scripts, visit http://gaia.rutgers.edu/Mapserver/NJ. I’d like to acknowledge Dr. David Hill, his research group, and Rutgers, the State University of New Jersey, for making this project possible.

Special thanks to Alison Wood for providing this post. Questions for Alison: AlisonWood@utexas.edu
